How we set up GPT-Neo (a GPT-3 Alternative)

For the upcoming Coding.Waterkant Hackathon, we set up a server running GPT-Neo, an open-source text-generation model built as an alternative to GPT-3.

While preparing the Coding.Waterkant Hackathon, we were looking for an alternative to OpenAI's GPT-3 and found GPT-Neo by EleutherAI, an implementation of model- and data-parallel GPT-3-like models built on the mesh-tensorflow library.

Since the launch of GPT-3 in 2020, many experiments with this new language model have popped up in the tech and AI scene. GPT-3 can produce text that is, in many cases, indistinguishable from human-written text. Thanks to its architecture and the huge dataset it was trained on, it can be used to produce AI-written articles on specific topics, write and continue dialogues, or even write code.

Unfortunately, GPT-3 is proprietary software. Access to its API is currently restricted and must be approved by OpenAI.

The open-source alternative GPT-Neo can be accessed and deployed by anyone on their own hardware. This makes it especially interesting for us: we can put text-generation technology into the hands of many builders and see what ideas they come up with.

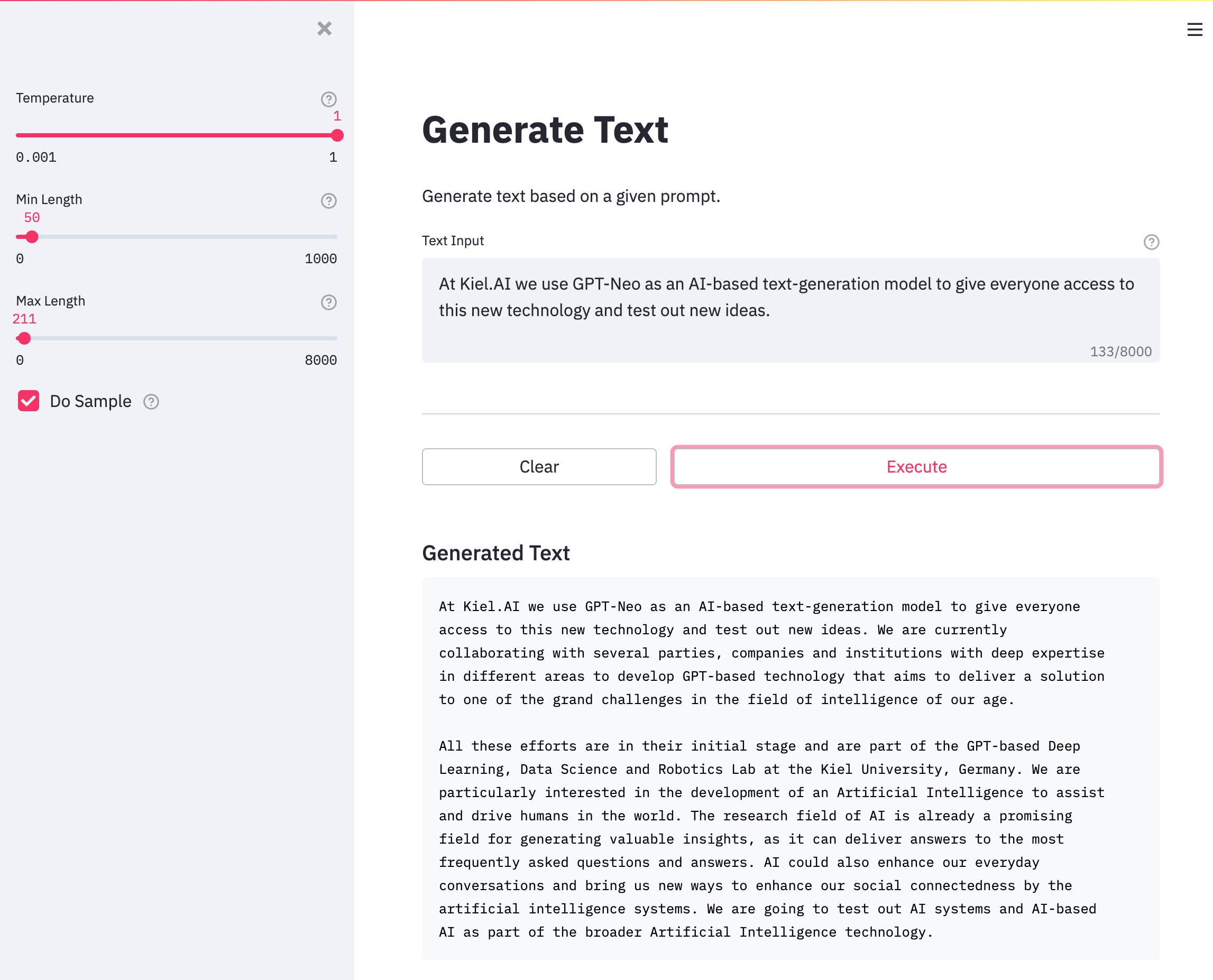

Using the open-source library Opyrator, it was very easy for us to wrap a REST API as well as a web UI around GPT-Neo. We then deployed the UI and API on a GPU instance (p2.xlarge) in the AWS cloud using Docker. You can also deploy the model on a server without a GPU, but inference is then much slower.
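To give an idea of how such a deployment can be used from your own code, here is a minimal client sketch using only the Python standard library. It rests on several assumptions that are not taken from our setup: that the server is reachable at `http://localhost:8080`, that the API exposes a `POST /call` endpoint, and that the wrapped function accepts the hypothetical input fields `prompt` and `max_length`. Check the API documentation page of your own instance for the actual schema.

```python
import json
import urllib.request

# Assumption: the server runs locally on port 8080. Adjust to your deployment.
DEFAULT_BASE_URL = "http://localhost:8080"


def build_request(prompt: str, max_length: int = 100,
                  base_url: str = DEFAULT_BASE_URL) -> urllib.request.Request:
    """Build a JSON POST request for the text-generation endpoint."""
    # Hypothetical field names: they must match the input model
    # of the function that was wrapped on the server side.
    payload = {"prompt": prompt, "max_length": max_length}
    return urllib.request.Request(
        # Assumption: the API exposes its function under POST /call.
        url=base_url.rstrip("/") + "/call",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, max_length: int = 100,
             base_url: str = DEFAULT_BASE_URL, timeout: float = 60.0) -> dict:
    """Send the prompt to the server and return the decoded JSON response."""
    request = build_request(prompt, max_length, base_url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Calling `generate("Once upon a time")` against a running instance returns the parsed JSON response. The generous default timeout is deliberate, since generation on a CPU-only server can take a while.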

If you want to deploy your own GPT-Neo instance, feel free to clone, use, and modify the code we provide in the project's GitHub repository.